
In my time running communities, 1:1 matching has always been one of my favorite programs. At their core, professional communities are about meeting people and building a network. Casual virtual coffee chats are one of the best ways to do that. We hear from many members that 1:1s are one of their favorite programs, too.

Superpath Pro members have shared how these chats have sparked many years-long relationships. Because of the power of the program, the Superpath team is continuing to invest in the product.

For most of Superpath’s history, 1:1s were random connections. Superpath Pro members opted in each month. We paired them with another member of the community. There was an ongoing avoid list to ensure pairs didn’t repeat, but that was the only logic.
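For the curious, random pairing with an avoid list can be sketched in a few lines of Python. This is only an illustration, not the Airtable automation we actually ran: the function name `random_pairs`, the retry approach, and the sample names are all assumptions made up for the example.

```python
import random

def random_pairs(members, avoid, seed=None, max_tries=1000):
    """Shuffle members and pair neighbors, reshuffling if any pair is on the avoid list.

    `avoid` is a set of frozensets, one per pair that has already been matched.
    Returns (pairs, leftover), where leftover is the odd person out or None.
    """
    rng = random.Random(seed)
    pool = list(members)
    for _ in range(max_tries):
        rng.shuffle(pool)
        # Pair up neighbors: (0, 1), (2, 3), ...
        pairs = [tuple(pool[i:i + 2]) for i in range(0, len(pool) - 1, 2)]
        if all(frozenset(p) not in avoid for p in pairs):
            leftover = pool[-1] if len(pool) % 2 else None
            return pairs, leftover
    raise RuntimeError("no valid pairing found; the avoid list may be too restrictive")

# Hypothetical members and past matches
members = ["Ana", "Ben", "Cam", "Dee"]
avoid = {frozenset({"Ana", "Ben"}), frozenset({"Cam", "Dee"})}
pairs, leftover = random_pairs(members, avoid, seed=1)
```

The avoid list is the only constraint here, which is exactly why this kind of matching is easy to run and also why it ignores everything else about the two people.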

The program was easy to run because of some cool automations that Jimmy and Eric created in Airtable. But there were a few ongoing issues with the experience.

Superpath is a community of folks with a diverse range of roles across the world of content marketing, from in-house to freelance to agency. Some manage people, some are solo. Some are earlier in their career, some are very experienced. The variety of backgrounds produces a dynamic Slack community, but it doesn't always make for optimal 1:1 pairings.

We matched people, set up an intro email, and then it was up to the community members to make it happen. We found out that lots of pairs never met. The back-and-forth emails to find a time to talk drained the excitement of getting a new person to meet.

Community members still liked the v0 matching program, but we saw an opportunity to fix these recurring challenges we’d heard about.

In December 2025, we overhauled the matching program. We built a Claude prompt that factored in background, timezones, and connection requests. We also started requiring all participants to provide a personal calendar link.

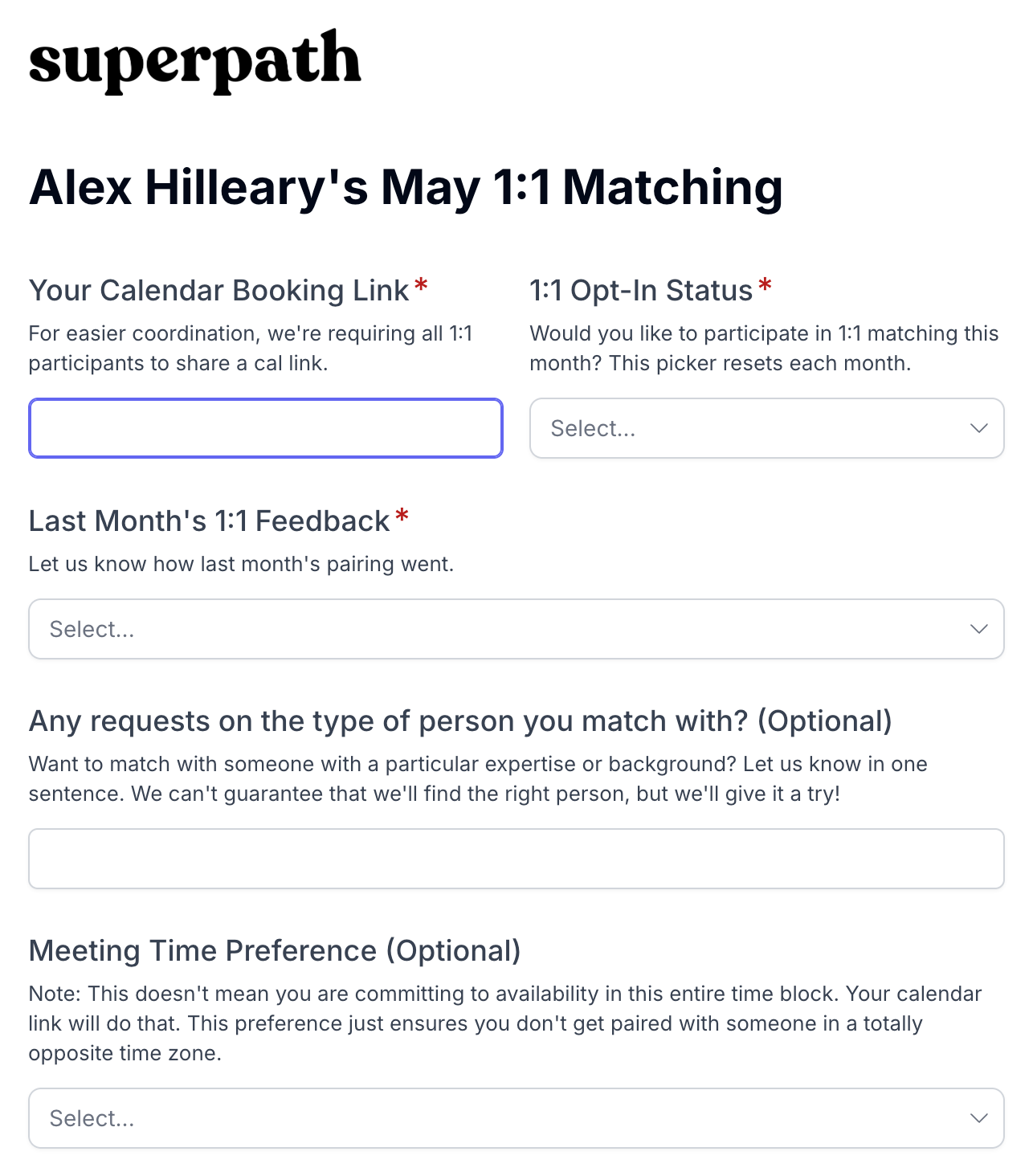

When someone joins Superpath Pro, we gather public information about the person’s role, job, and background from LinkedIn. Each month, when a member opts in, they get the chance to update that information. We also ask for timezone preferences, and specific connection requests.

Once everyone opts in for the month, our pool of matches is set.

While we make a few small tweaks to the matching algorithm each month, the prompt we give Claude generally looks something like this (simplified for readability):

Hard rules:

- Never pair two people who have been matched before
- Respect avoid lists

Soft rules (in priority order):

1. Fill connection requests. Read each person's request and try to match them with someone who fits
2. Match timezones
3. Match backgrounds (similar tags, industries, roles)
4. Prioritize people who got ghosted or didn't get scheduled last month

The other big change we made was requiring calendar links: Calendly, Google appointments, whatever, just something where the other person could book directly on a calendar. The change meant no more back-and-forth emails to coordinate.

In the intro email, this is the call to action:

Next Step

ABC's calendar link: https://calendly.com/ABC/30-min

XYZ's calendar link: https://calendar.app.google/XYZ

Whoever reads this first, please take a moment to schedule the 1:1 on the other person's calendar.

Hope it's a great conversation!

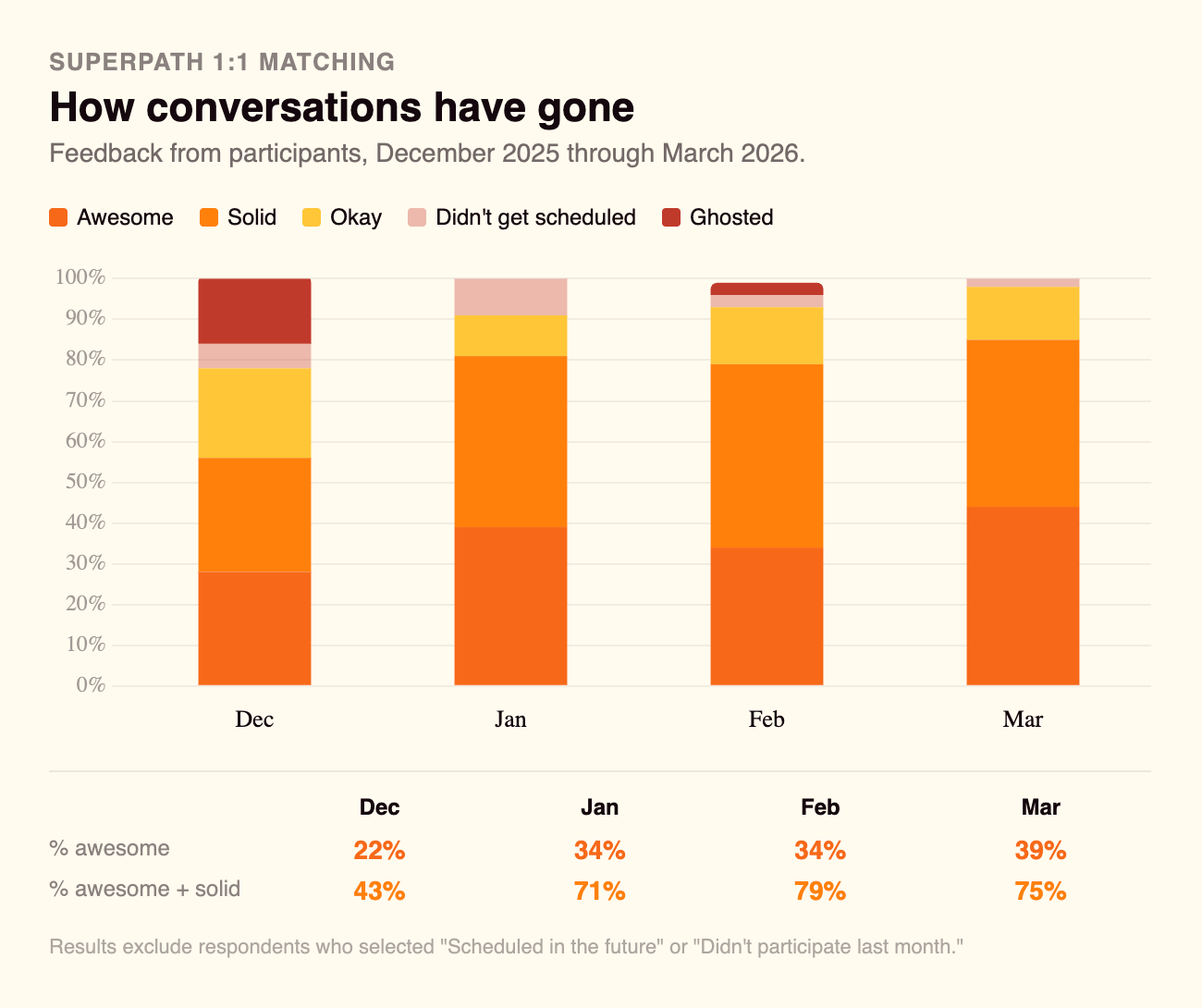

The response to the updates has been overwhelmingly positive. A handful of folks have sent nice notes about the change, and the quantitative feedback shows it too. Many people report Awesome and Solid conversations and way fewer ghosts.

While the program is improving, there’s still more work to do.

First, it’d be great to continue to improve the conversation quality and see an even higher percentage of folks reporting Awesome conversations.

Second, we need to continue to grow the pool of folks opting into the program. A side effect of enforcing calendar links and a few required fields is that we increased the friction to participate.

At peak in the randomized version of the matching program, we had 94 participants one month. With the newer version, we didn’t crack 70. Fresh opt-ins ensure there are always new people to talk to.

The answer to both of these issues: make the program even better.

When we launched the newer version, we added a field called connection request. In matching programs in the past, I’d never let people make specific requests, but I thought I’d give it a try to test how well AI could handle it.

This was the field prompt in the opt-in form:

Any requests on the type of person you match with? (Optional)

Want to match with someone with a particular expertise or background? Let us know in one sentence. We can't guarantee that we'll find the right person, but we'll give it a try!

Not everyone made connection requests, but the majority of folks did. In the most recent match, we had 61 participants, and 36 made connection requests. In all the matching batches, we hovered around that 60% mark.

Those requests vary in scope and specificity.

In our five months of asking for requests, not a single person submitted one that was unreasonable or in bad faith. Nonetheless, the requests wreaked havoc on the matching logic.

Here is how the buckets played out for this past round (similar to previous rounds):

Thirteen people asked for someone like them (in-house wanting in-house, freelancers wanting freelancers). These are the easiest matches and they're also the ones people rate highest.

This bucket is the most interesting, so bear with me on the longer explanation.

In-house people usually prefer to match with in-house people, but a lot of freelancers want to match with in-house folks. Makes sense. Freelancers want to network more with people who could eventually hire them.

In the last match pool, we had:

16 total in-house folks.

10 freelancers requested an in-house person.

So, before factoring everything else in (timezone, avoid lists, etc.), we have some issues. After matching the 8 in-house people who requested other in-house people, we only had 8 in-house folks left for the 10 freelancers who requested them.
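Here is that supply and demand math written out as a quick sketch. The numbers come straight from the last match pool; the variable names are just labels I made up for the example.

```python
# Counts from the most recent match pool
in_house_total = 16
in_house_wanting_in_house = 8      # matched with each other first
freelancers_wanting_in_house = 10

# In-house people still available after the in-house-to-in-house pairs are made
in_house_left = in_house_total - in_house_wanting_in_house

# Freelancer requests that cannot be filled, even before timezones and avoid lists
unfilled_requests = max(0, freelancers_wanting_in_house - in_house_left)

print(in_house_left)       # 8
print(unfilled_requests)   # 2
```

Every remaining in-house person gets claimed by a freelancer request, and two freelancers still miss out.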

But more importantly, there’s a long term structural issue here.

If you're in-house and don't submit a specific request for another in-house person, you’re likely to get paired with a freelancer every time.

That's fine occasionally, but not a great long-term experience when you never get to talk to in-house peers.

Thirteen people asked for a specific topic, industry, or career stage. These can be tricky.

Some months the right person is in the pool. Some months they aren't.

Also, sometimes we just don’t have the data to know if that person is in the pool. Unless you’ve re-written your bio, your bio comes from your LinkedIn bio. We don’t capture the full set of skills people have.

If you requested someone who is really good at using Clickup, unless we happen to have a Clickup evangelist who put “Clickup skillz to the max” in their LinkedIn bio, we aren’t going to be helpful in finding you the right person.

The niche requests also have an outsized impact on the overall matching. Instead of optimizing for good matches for the whole pool, the logic spends all its time trying to match niche things.

In the end, roughly a third of niche and skill requests worked, a third were structurally un-fillable, and a third depended on luck. But trying to match the niche requests threw off the logic for everyone else.

To make the 1:1 program even better, we’re iterating again. The May 2026 matching program will introduce a new version of curated matching. We’re making a couple of major changes.

The reason I’ve always loved peer-to-peer connections is that they have a really high hit rate. When we pair two people with the same kind of role, experience, and company, there’s a really good chance they are working on the same things. Shared context creates good conversations.

Side note: If you’re a freelancer and you’re disappointed that you won’t be able to connect with in-house folks anymore in 1:1s, I get it. But we have several things we’re working on to help improve connections between freelancers and in-house folks. That starts with a searchable member directory coming out in the next few months.

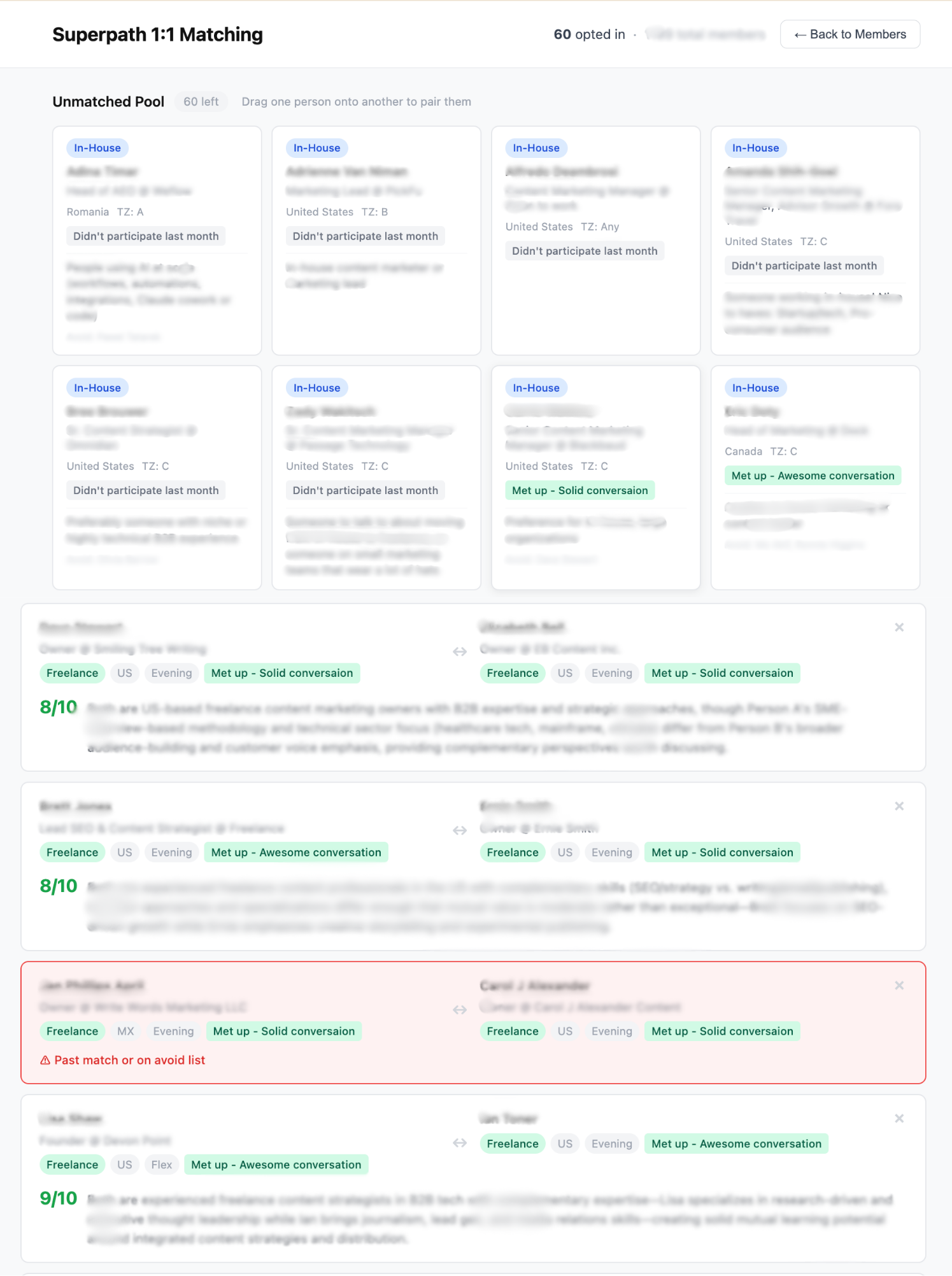

While the Claude prompt worked well, there’s a benefit to the Superpath team getting our hands a little dirty in the matching process. This month, we used simplified logic to create a scoring mechanism for any given match.

Instead of feeding the logic in as a prompt and getting a set of pairs as the output, we’re making the matches ourselves and having Claude score the pairs. We used Claude Code to create a drag and drop set of cards, which allows us to play around with the different pairs until we land on a set we’re really happy with.
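To give a feel for what scoring a single pair might look like, here is a rough rule-based sketch. It is not our actual scoring (Claude does that part): the `Member` fields, the `score_pair` function, and every weight below are assumptions invented for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class Member:
    name: str
    role_type: str                 # e.g. "in-house", "freelance", "agency"
    utc_offset: int                # hours from UTC
    tags: set = field(default_factory=set)

def score_pair(a, b, past_pairs):
    """Return None for a disallowed pair, else a 0-100 score (illustrative weights)."""
    # Hard rule: never pair two people who have been matched before
    if frozenset((a.name, b.name)) in past_pairs:
        return None
    score = 0
    # Peer-to-peer: same kind of role is worth the most
    if a.role_type == b.role_type:
        score += 50
    # Closer timezones score higher (naive difference, ignores date-line wraparound)
    hours_apart = abs(a.utc_offset - b.utc_offset)
    score += max(0, 30 - 5 * hours_apart)
    # Shared background tags, capped at 20 points
    score += min(20, 10 * len(a.tags & b.tags))
    return score

# Hypothetical members
ana = Member("Ana", "in-house", -5, {"b2b", "seo"})
ben = Member("Ben", "in-house", -8, {"seo"})
print(score_pair(ana, ben, past_pairs=set()))   # 75
```

A score like this is only a starting point. The value of the drag and drop cards is that a person can override it when they know two members would hit it off.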

What I really love about the human-in-the-loop process (we actually tested it already with the April batch) is that it allows us to say, “Oh look at these two. They haven’t connected before, but we really know they’d hit it off” in a way that AI never could on its own.

I’m really excited for the next iteration of this program.

If you’re a Superpath Pro member and have participated in our 1:1s, thank you! Keep the feedback coming. You can always DM me in Slack.

If you’re a Pro member who hasn’t tried 1:1s lately, there has never been a better time.

And if you haven’t joined Superpath Pro yet, join us! This is just one of the many community programs that we’re designing intentionally. There’s a 30-day free trial, so you can even go through a 1:1 match before committing.

Happy matching!

- AH